TL;DR:

- AI transcription operates through a multi-step pipeline affecting accuracy and reliability.

- It offers significant time savings but requires human oversight for high-stakes accuracy.

- Choosing the right solution involves assessing industry-specific benchmarks, compliance, and post-processing features.

AI transcription tools can convert an hour of audio into text in just a few minutes, yet most professionals treat the technology as a simple black box. That assumption is costly. The reality is that AI transcription transforms audio and video content into text through a layered, multi-step pipeline where each stage affects final accuracy. Legal teams, medical practices, and enterprise operations all stand to gain enormously, but only when they understand what the technology actually does, where it excels, and where it still needs human judgment. This article walks you through all of it.

Table of Contents

- How AI-powered transcription works: The core pipeline

- Key benefits for professionals and enterprises

- Limitations and the need for human oversight

- Choosing and applying AI transcription: A buyer's checklist

- Why AI transcription is not a 'set-and-forget' solution

- Take the next step with AI transcription

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| End-to-end process | AI-powered transcription uses stepwise modeling to turn speech into text quickly and efficiently. |
| Major productivity boost | Professionals in law, medicine, and business save hours by automating transcription and extracting insights from conversations. |
| Human oversight required | For critical applications, especially legal and healthcare, human review remains essential for accuracy and compliance. |
| Choosing wisely | Organizations should evaluate AI transcription tools for accuracy, security, and compatibility before deployment. |

How AI-powered transcription works: The core pipeline

Now that you know why AI-powered transcription is so appealing, let's look under the hood at how it really works. The process is not a single step. It is a structured sequence of operations, and understanding each one helps you set realistic expectations and make better decisions about which tools to trust.

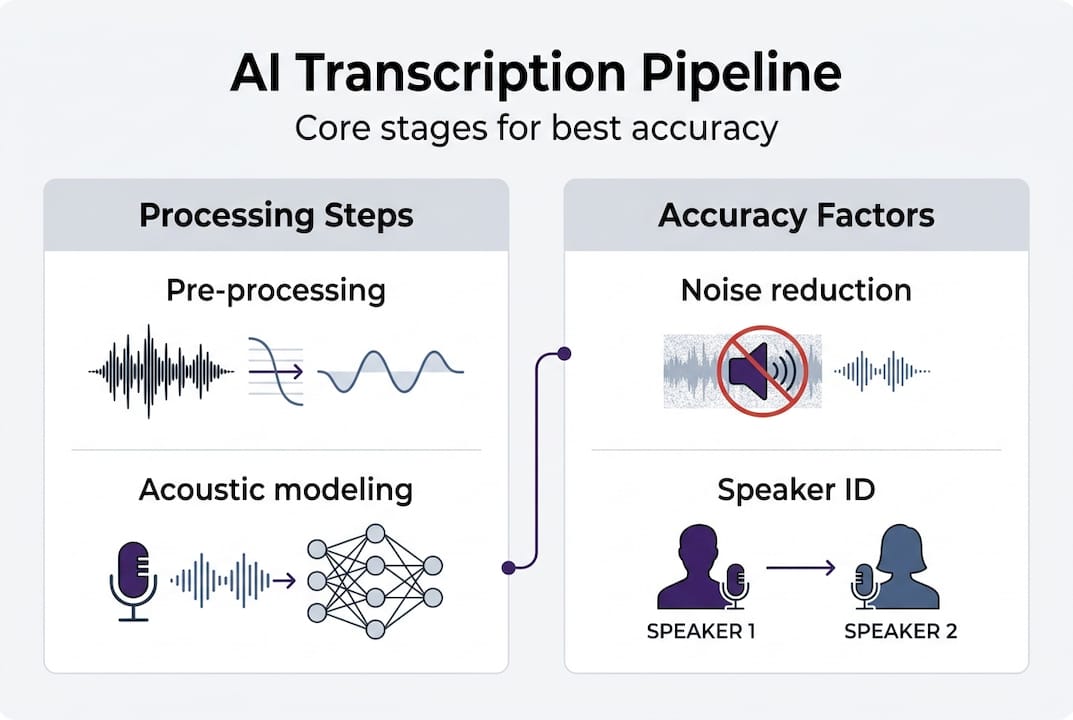

The multi-step transcription process follows this order:

- Audio pre-processing. Raw audio is cleaned and normalized. Background noise is filtered, volume levels are equalized, and silence is stripped. This step directly affects everything that follows. Poor audio quality at this stage compounds errors downstream.

- Feature extraction. The cleaned audio is converted into numerical representations, typically mel-frequency cepstral coefficients or spectrograms. These are the data structures the model actually reads, not the raw sound wave.

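- Acoustic modeling. Deep neural networks map the extracted features to probable phonemes, characters, or word pieces. This is where the heavy computational lifting happens. End-to-end transformer models such as OpenAI's Whisper learn this stage jointly with much of the language modeling described in the next step, rather than as a separate component.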

- Language model integration. A language model adds context and grammar. It resolves ambiguity between words that sound identical, like "their" versus "there," using surrounding sentence structure.

- Decoding and post-processing. The system selects the most probable word sequence and then applies punctuation, capitalization, speaker labels, and formatting to produce a readable transcript.
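To make the first stage of the list above concrete, here is a minimal sketch of two pre-processing operations: stripping leading and trailing silence, then equalizing volume by peak normalization. It assumes audio has already been decoded into a list of float samples between -1.0 and 1.0. The function names, the silence threshold, and the target peak are illustrative choices, not the behavior of any particular product, and noise filtering is left out entirely.

```python
def trim_silence(samples, threshold=0.02):
    """Drop leading and trailing samples whose magnitude is below threshold."""
    start = 0
    end = len(samples)
    while start < end and abs(samples[start]) < threshold:
        start += 1
    while end > start and abs(samples[end - 1]) < threshold:
        end -= 1
    return samples[start:end]


def normalize_peak(samples, target_peak=0.9):
    """Scale samples so the loudest one has magnitude target_peak."""
    peak = max((abs(s) for s in samples), default=0.0)
    if peak == 0.0:
        return list(samples)  # empty or all-silent input: nothing to scale
    scale = target_peak / peak
    return [s * scale for s in samples]


def preprocess(samples):
    """Trim silence first, then normalize what remains."""
    return normalize_peak(trim_silence(samples))


# Hypothetical quiet recording with silence at both ends.
raw = [0.0, 0.001, 0.2, -0.45, 0.3, 0.005, 0.0]
cleaned = preprocess(raw)
print(cleaned)
```

Trimming before normalizing matters in this sketch: if the order were reversed, very quiet background samples could be amplified above the silence threshold and survive the trim.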

Here is a quick comparison of how each stage contributes to the final output:

| Pipeline stage | Primary function | Impact on accuracy |
|---|---|---|
| Pre-processing | Noise reduction, normalization | High: sets baseline quality |
| Feature extraction | Audio to numerical data | Medium: affects model input |
| Acoustic modeling | Maps features to words | Very high: core recognition |
| Language modeling | Adds grammar and context | High: resolves ambiguity |
| Post-processing | Formatting, speaker labels | Medium: affects usability |

For professionals exploring AI transcription in industry, this pipeline matters because each stage is a potential failure point. A noisy deposition recording, a doctor using rare clinical terminology, or a conference call with six speakers all stress different parts of the system. Knowing this helps you ask better questions when evaluating vendors.
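The language modeling stage is easier to picture with a toy example. The sketch below resolves the "their" versus "there" ambiguity mentioned earlier by scoring each candidate against its neighboring words. The bigram counts are made up for illustration; production systems use trained models and log-probabilities rather than a hand-written table, so treat this as a picture of the idea only.

```python
# Toy bigram counts standing in for a trained language model (made-up numbers).
BIGRAM_COUNTS = {
    ("over", "there"): 50,
    ("over", "their"): 1,
    ("their", "lawyer"): 40,
    ("there", "lawyer"): 1,
    ("called", "their"): 30,
    ("called", "there"): 2,
}


def sequence_score(words):
    """Sum the counts of each adjacent word pair; unseen pairs add zero."""
    return sum(BIGRAM_COUNTS.get(pair, 0) for pair in zip(words, words[1:]))


def pick_homophone(left, candidates, right):
    """Choose the candidate that fits best between its neighboring words."""
    return max(candidates, key=lambda w: sequence_score([left, w, right]))


print(pick_homophone("called", ["their", "there"], "lawyer"))  # their
print(pick_homophone("over", ["their", "there"], "now"))       # there
```

The acoustic evidence for the two candidates is identical, so only context can separate them. That is also why rare names and jargon are fragile: if the surrounding words never appeared in training data, the context offers little help.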

Key benefits for professionals and enterprises

With the core technology covered, what does all this mean in practice for professionals and large organizations? The short answer: significant time savings and better access to information buried inside audio and video files.

AI transcription transforms hours of meeting or deposition audio into searchable, analyzable text in a fraction of the manual time. That speed shift is not trivial. At the typical 4:1 manual ratio, a paralegal would spend roughly eight hours transcribing a two-hour deposition. With an AI-generated transcript, the same paralegal can review and verify the text in under thirty minutes.

Here are the core benefits that matter most across professional sectors:

- Legal. Depositions, client calls, and court recordings become searchable documents. Attorneys can locate key statements instantly rather than scrubbing through audio files.

- Medical. Physicians dictate notes and receive structured, formatted documentation. Clinical workflows speed up, reducing the administrative burden that contributes to burnout.

- Corporate. Earnings calls, board meetings, and training sessions are automatically documented. Compliance teams gain a reliable audit trail without manual effort.

- Insight extraction. Advanced platforms layer analytics on top of transcripts, identifying sentiment, extracting named entities, and clustering topics. You stop just recording what was said and start understanding what it means.

- Multilingual support. Global teams working across languages benefit from models trained on diverse speech data, reducing the need for separate human translators for initial documentation.

Statistic callout: Manual transcription typically runs at a 4:1 ratio, meaning four hours of work for every one hour of audio. AI-powered systems flip that ratio dramatically, processing the same content in minutes.
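The arithmetic behind that callout can be sketched in a few lines. The 4:1 manual ratio comes from the callout above. The machine processing rate of five minutes per audio hour and the human review rate of a quarter hour per audio hour are assumptions chosen for illustration, and should be replaced with figures measured in your own pilot.

```python
def manual_hours(audio_hours, ratio=4.0):
    """Typing time implied by a manual ratio of `ratio` hours per audio hour."""
    return audio_hours * ratio


def assisted_hours(audio_hours, processing_minutes_per_hour=5.0, review_ratio=0.25):
    """Machine processing time plus human review time (both rates are assumptions)."""
    processing = audio_hours * processing_minutes_per_hour / 60.0
    review = audio_hours * review_ratio
    return processing + review


audio = 2.0  # a two-hour recording
saved = manual_hours(audio) - assisted_hours(audio)
print(f"manual: {manual_hours(audio):.1f} h, assisted: {assisted_hours(audio):.2f} h, saved: {saved:.2f} h")
```

The review term dominates the assisted total, which is why the review rate you can sustain on real audio matters more to the business case than raw processing speed.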

Pro Tip: Do not evaluate an AI transcription tool solely on speed. Ask vendors for accuracy benchmarks on audio that matches your actual use case: your industry's vocabulary, typical recording conditions, and speaker count. A tool that scores 95% accuracy on clean studio audio may drop to 80% on a noisy conference call.
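When you ask for benchmarks, it helps to know how accuracy is usually measured. The standard metric is word error rate (WER): the number of word substitutions, insertions, and deletions needed to turn the machine transcript into a trusted reference, divided by the number of words in the reference. Quoted "accuracy" figures are often reported as one minus WER, though vendors differ in how they normalize text first. The sketch below is a plain-Python version that only lowercases and splits on whitespace; the sample sentences are invented.

```python
def word_error_rate(reference, hypothesis):
    """Word-level edit distance between the two texts, divided by reference length."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # reference word missing from hypothesis
                curr[j - 1] + 1,     # extra word in hypothesis
                prev[j - 1] + cost,  # match or substitution
            ))
        prev = curr
    return prev[-1] / len(ref)


reference = "the patient was prescribed metoprolol twice daily"
hypothesis = "the patient was prescribed metro pole twice daily"
wer = word_error_rate(reference, hypothesis)
print(f"WER: {wer:.3f}")  # WER: 0.286
```

Running this on a small set of your own recordings, with transcripts corrected by a person who knows the vocabulary, gives you a number you can compare directly against a vendor's claims.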

For organizations assessing transcription in legal and medical fields, the productivity case is strong. But productivity gains only hold when the output is reliable enough to act on.

Limitations and the need for human oversight

But even with these advances, AI-powered transcription is not perfect. Let's dig into the critical areas where humans remain essential.

IBM's research acknowledges that speech recognition is not a solved problem. Probabilistic models have inherent limits, and those limits surface most painfully in exactly the environments where professionals need the highest accuracy.

Here is where current AI transcription consistently struggles:

| Challenge | Why it matters | Sectors most affected |
|---|---|---|
| Heavy accents and dialects | Models trained on limited data underperform | Legal, medical, global enterprise |
| Domain-specific jargon | Rare terms get misrecognized | Medical, legal, technical |
| Poor audio quality | Noise compounds errors at every stage | All sectors |
| Multiple overlapping speakers | Diarization fails with crosstalk | Corporate meetings, depositions |
| Regulatory compliance | HIPAA, GDPR require strict data handling | Medical, legal, finance |

"Probabilistic AI still struggles with nuance, confidentiality, and context, especially in high-stakes sectors." This is not a criticism of the technology. It is a design reality that every professional deploying these tools must account for.

Bias in training data is another underappreciated risk. Models trained predominantly on certain accents or speech patterns perform worse for speakers outside that demographic. In a legal context, a misrecognized name or misquoted testimony is not a minor inconvenience. It is a liability.

Pro Tip: For any transcript used in legal proceedings, medical records, or regulatory filings, build a mandatory human review step into your workflow before the document is finalized. Treat the AI output as a first draft, not a finished product.
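One way to make that review step more efficient, without skipping any of it, is to order the work so reviewers see the riskiest passages first. The sketch below assumes the transcription engine returns a confidence score between 0 and 1 for each segment. Not every engine exposes one, and the `Segment` class, the 0.85 threshold, and the sample data are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    start_seconds: float
    text: str
    confidence: float  # hypothetical 0-1 score; not every engine provides one


def review_queue(segments, flag_below=0.85):
    """Return (segment, flagged) pairs, least confident first.

    Every segment stays in the queue. The flag only marks where a
    reviewer should slow down and re-listen to the audio.
    """
    ordered = sorted(segments, key=lambda s: s.confidence)
    return [(s, s.confidence < flag_below) for s in ordered]


segments = [
    Segment(0.0, "Please state your name for the record.", 0.97),
    Segment(4.2, "My name is Siobhan Nakamura.", 0.61),
    Segment(7.9, "I reside at the address on file.", 0.88),
]

for seg, flagged in review_queue(segments):
    marker = "CHECK" if flagged else "ok"
    print(f"[{marker:>5}] {seg.start_seconds:6.1f}s  {seg.text}")
```

Confidence scores are themselves model estimates, so a high score is not proof of correctness. The queue changes the order of review, not its scope.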

Privacy is equally critical. Before uploading sensitive audio to any platform, verify that it meets the compliance requirements relevant to your sector. HIPAA-covered entities and organizations operating under GDPR face real consequences if data handling protocols are not verified in advance.

Choosing and applying AI transcription: A buyer's checklist

If you're ready to leverage AI transcription, here's how to choose and apply a solution that fits your unique professional needs.

Start with your evaluation criteria. Not all platforms are built for professional-grade work, and the gap between consumer tools and enterprise solutions is significant.

What to look for in an AI transcription solution:

- Accuracy benchmarks specific to your industry's vocabulary and audio conditions

- Speaker diarization that handles your typical meeting or recording size

- Domain-aware vocabularies for legal, medical, or technical terminology

- HIPAA and GDPR compliance certifications, not just claims

- Integration with your existing document management or workflow systems

- Export options that match your documentation standards

- Transparent pricing that scales with your actual usage

Once you have shortlisted vendors, follow a structured implementation approach:

- Run a pilot. Select a representative sample of your real audio content, not the cleanest recordings you have. Test accuracy on the material that actually challenges the system.

- Collect structured feedback. Have end users document specific error types: misrecognized terms, missed speaker changes, formatting inconsistencies. This data drives your vendor conversations.

- Refine and configure. Most enterprise platforms allow custom vocabulary lists and speaker profiles. Use pilot feedback to configure these before scaling.

- Scale with oversight. Roll out to broader teams while maintaining a review step for high-stakes outputs. Reduce oversight only as confidence in accuracy builds over time.
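The feedback and configuration steps above work best when errors are logged in a consistent shape. The sketch below shows one possible format and two summaries built from it: a count of errors by type for vendor conversations, and a list of repeatedly corrected terms that are candidates for a custom vocabulary. The field names, file names, and error categories are hypothetical.

```python
from collections import Counter

# Hypothetical reviewer log from a pilot; "correct" holds the intended term, if any.
pilot_log = [
    {"file": "deposition_01.wav", "type": "misrecognized_term", "correct": "voir dire"},
    {"file": "deposition_01.wav", "type": "missed_speaker_change", "correct": None},
    {"file": "clinic_07.wav", "type": "misrecognized_term", "correct": "metoprolol"},
    {"file": "clinic_09.wav", "type": "misrecognized_term", "correct": "metoprolol"},
    {"file": "board_03.wav", "type": "formatting", "correct": None},
]


def error_counts(log):
    """Count logged errors by type."""
    return Counter(entry["type"] for entry in log)


def vocabulary_candidates(log, min_occurrences=2):
    """Terms that reviewers had to correct at least min_occurrences times."""
    terms = Counter(
        entry["correct"]
        for entry in log
        if entry["type"] == "misrecognized_term" and entry["correct"]
    )
    return sorted(term for term, count in terms.items() if count >= min_occurrences)


for error_type, count in error_counts(pilot_log).most_common():
    print(f"{error_type}: {count}")
print("custom vocabulary candidates:", vocabulary_candidates(pilot_log))
```

Even a spreadsheet with these three columns is enough. The point is that "it makes mistakes" becomes "three of five errors were misrecognized terms, and one drug name accounts for two of them."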

Post-processing features like smart punctuation, speaker diarization, and structured formatting are what separate a raw AI output from a document professionals can actually use. When evaluating an AI transcription solution, weight these features heavily. Raw word output with no structure creates its own manual workload.
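As a small illustration of what structured formatting means in practice, the sketch below takes diarized output, assumed here to be a list of (speaker label, text) pairs, and merges consecutive segments from the same speaker into readable paragraphs. The input shape and speaker labels are assumptions; real platforms return richer structures with timestamps.

```python
def format_transcript(segments):
    """Merge consecutive segments from the same speaker into one labeled line."""
    lines = []
    last_speaker = None
    for speaker, text in segments:
        text = text.strip()
        if not text:
            continue  # skip empty segments produced by pauses
        if speaker == last_speaker:
            lines[-1] += " " + text
        else:
            lines.append(f"{speaker}: {text}")
            last_speaker = speaker
    return "\n".join(lines)


# Hypothetical diarized output.
segments = [
    ("Speaker 1", "Good morning."),
    ("Speaker 1", "Let's begin with the timeline."),
    ("Speaker 2", "Thank you."),
    ("Speaker 2", "  "),
    ("Speaker 1", "Go ahead."),
]
print(format_transcript(segments))
```

Without this kind of merging, a one-hour meeting can arrive as hundreds of short fragments, which is the manual workload the paragraph above warns about.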

Why AI transcription is not a 'set-and-forget' solution

Having outlined practical steps for adoption, let's look at why the promise of fully automatic transcription remains elusive, and why that should not discourage you.

The automation hype around AI transcription often skips a crucial detail. These systems are probabilistic, not deterministic. They make statistically informed guesses based on training data. In a corporate meeting discussing quarterly targets, a wrong word is annoying. In a medical record or a legal deposition, it can change meaning in ways that carry real consequences.
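A quick calculation shows why even high accuracy leaves room for consequential errors. The sketch below assumes errors are independent from word to word, which real speech does not strictly satisfy, and a speaking rate of roughly 150 words per minute, which is a rough rule of thumb rather than a measured figure.

```python
def expected_errors(word_count, accuracy):
    """Average number of wrong words at a given per-word accuracy."""
    return word_count * (1.0 - accuracy)


def chance_of_any_error(word_count, accuracy):
    """Probability of at least one wrong word, assuming independent errors."""
    return 1.0 - accuracy ** word_count


words_per_hour = 150 * 60  # assumed speaking rate of 150 words per minute

print(f"expected errors in one hour at 95%: {expected_errors(words_per_hour, 0.95):.0f}")
print(f"chance of an error in a 200-word passage at 99%: {chance_of_any_error(200, 0.99):.2f}")
```

Under these assumptions, a one-hour recording at 95% accuracy contains hundreds of wrong words, and even at 99% a 200-word passage is more likely than not to contain at least one. Which word it is cannot be known without review.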

We have seen organizations deploy AI transcription with confidence, skip the review step to save time, and then discover errors in archived records months later. Correcting those records retroactively is far more expensive than building a review step into the original workflow.

The probabilistic limits of current models mean that nuance, confidentiality, and context remain genuinely hard problems. A physician's offhand comment captured in a recording, a confidential negotiation detail, a witness's hesitation: these carry meaning that a language model can miss or misrepresent.

The right mental model is not AI versus human. It is AI as a highly capable first-pass processor, and human expertise as the quality gate. Platforms built for professional AI transcription understand this and design their workflows accordingly. The future is hybrid, and the organizations that thrive will be the ones that embrace both the speed of AI and the judgment of skilled reviewers.

Take the next step with AI transcription

With this perspective in mind, professionals have more choices than ever when it comes to AI-powered transcription. The key is finding a platform built specifically for the accuracy and compliance demands of your sector.

AuroraNote AI transcription is designed exactly for this. It combines Whisper-based acoustic modeling with speaker diarization, domain-aware vocabularies, and smart punctuation to deliver enterprise-grade transcripts from the start. Beyond raw transcription, AuroraNote layers in executive summaries, topic clustering, sentiment detection, and entity extraction, turning your audio and video content into structured, searchable knowledge assets. Legal, medical, and corporate teams can move from raw recording to actionable insight faster than ever, without sacrificing accuracy or compliance. Explore what AuroraNote can do for your organization today.

Frequently asked questions

What is AI-powered transcription?

AI-powered transcription uses machine learning models to convert audio to text automatically through a multi-step pipeline that includes acoustic modeling, language modeling, and post-processing. It is significantly faster than manual transcription and increasingly accurate across a wide range of audio conditions.

Is AI transcription accurate enough for legal and medical documents?

AI can achieve high accuracy, but human oversight is essential in legal and medical contexts to catch errors in specialized terminology, ensure verbatim accuracy, and meet regulatory standards like HIPAA and court admissibility requirements.

Are AI transcription tools secure for sensitive information?

The best platforms offer HIPAA and GDPR compliance, but privacy and compliance verification is critical before uploading any sensitive audio. Always request documentation of security certifications and data handling practices from vendors before committing.

How fast can AI-powered transcription process audio files?

Most AI tools drastically reduce turnaround time compared to manual transcription, often processing an hour of audio in just a few minutes. This frees professionals to focus on review and analysis rather than typing.